What Are the Confusion Matrix, Accuracy, Precision, Recall and F1 Score | Easy-to-Understand Details in 3 Min

- Intro

- What is a confusion matrix

- What are accuracy, precision, recall and F1 score

- How to choose the appropriate performance metric by case

- Common mistake with accuracy

- Conclusion

Intro

Have you ever found yourself uncertain about how to assess the performance of a model? In this article, I will clarify the concepts of the confusion matrix, accuracy, precision, recall, and F1 score, as well as when and why we use each of these evaluation metrics.

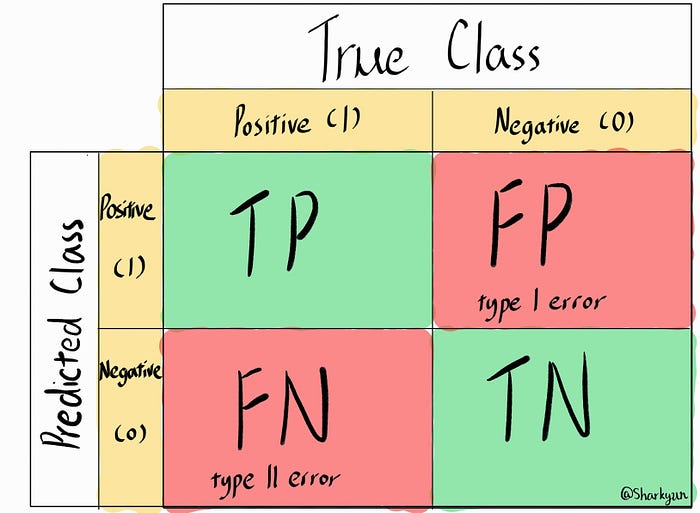

What is a confusion matrix

A confusion matrix is a table used to evaluate the performance of a classification model by comparing its predictions to the actual ground truth labels. In a binary classification problem, it is a 2×2 table that summarizes the model’s true positive (TP), true negative (TN), false positive (FP) and false negative (FN) predictions with respect to the positive class. In a multi-class problem, it is an N×N table (one row and one column per class), from which TP, TN, FP and FN can be derived for each class by treating that class as positive and all other classes as negative.
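To make the four cells concrete, here is a minimal Python sketch that counts them for a binary problem. It assumes labels are encoded as 1 (positive) and 0 (negative); the function name `confusion_counts` and the toy labels are illustrative only, not taken from any library.

```python
from typing import Dict, Sequence


def confusion_counts(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, int]:
    """Count TP, TN, FP and FN, assuming binary labels where 1 = positive, 0 = negative."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    counts = {"TP": 0, "TN": 0, "FP": 0, "FN": 0}
    for actual, predicted in zip(y_true, y_pred):
        if actual not in (0, 1) or predicted not in (0, 1):
            raise ValueError("this sketch only handles labels 0 and 1")
        if actual == 1 and predicted == 1:
            counts["TP"] += 1  # positive correctly predicted as positive
        elif actual == 0 and predicted == 0:
            counts["TN"] += 1  # negative correctly predicted as negative
        elif actual == 0 and predicted == 1:
            counts["FP"] += 1  # negative wrongly predicted as positive
        else:
            counts["FN"] += 1  # positive wrongly predicted as negative
    return counts


# Toy example with made-up labels.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 1, 0, 1, 0]
print(confusion_counts(y_true, y_pred))  # {'TP': 3, 'TN': 3, 'FP': 1, 'FN': 1}
```

Libraries such as scikit-learn provide the same table (for example through `sklearn.metrics.confusion_matrix`), but writing the loop out once shows that each cell is simply a count of one (actual, predicted) combination.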

What are accuracy, precision, recall and F1 score

Several performance metrics can be derived from the confusion matrix to assess the model’s performance, including accuracy, precision, recall and F1 score:

- Accuracy: Accuracy measures the overall correctness of the model’s predictions. It is calculated as the ratio of the correctly predicted instances (TP + TN) to the total number of instances in the dataset. High accuracy is desirable, but it can be misleading when dealing with imbalanced datasets.

Accuracy = (TP + TN) / (TP + TN + FP + FN)

- Precision: Precision measures the proportion of true positive predictions among the instances predicted as positive. It is useful when the cost of false positives is high, and you want to minimize the number of false positives.

Precision = TP / (TP + FP)

- Recall (Sensitivity or True Positive Rate): Recall measures the proportion of true positive predictions among all instances that are actually positive. It is useful when the cost of false negatives is high, and you want to minimize the number of false negatives.

Recall = TP / (TP + FN)

- F1 Score: The F1 score is the harmonic mean of precision and recall, providing a balanced metric for situations where both precision and recall are important. It helps avoid favoring models that perform well in only one of these metrics.

F1 Score = 2 * (Precision * Recall) / (Precision + Recall)
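The four formulas above translate directly into code. The sketch below is a plain-Python version; the function name `classification_metrics` and the example counts are hypothetical. It returns 0.0 whenever a denominator is zero, which is one common convention but an assumption here, since the formulas themselves are undefined in that case.

```python
from typing import Dict


def classification_metrics(tp: int, tn: int, fp: int, fn: int) -> Dict[str, float]:
    """Compute accuracy, precision, recall and F1 from confusion-matrix counts."""

    def safe_div(num: float, den: float) -> float:
        # Assumed convention: report 0.0 instead of raising ZeroDivisionError.
        return num / den if den else 0.0

    accuracy = safe_div(tp + tn, tp + tn + fp + fn)
    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    # F1 is the harmonic mean of precision and recall.
    f1 = safe_div(2 * precision * recall, precision + recall)
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical counts: 40 TP, 45 TN, 10 FP, 5 FN (100 instances in total).
print(classification_metrics(tp=40, tn=45, fp=10, fn=5))
```

For these counts, accuracy is 0.85, precision is 0.80, recall is about 0.889 and F1 is about 0.842. Note that F1 sits between precision and recall but closer to the lower of the two, which is the effect of using the harmonic mean.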

How to choose the appropriate performance metric by case

Choosing the appropriate metric depends on the specific characteristics of your dataset and the business goals of the problem you are trying to solve:

- Accuracy: Use accuracy when the classes are roughly balanced and you want a general sense of how well the model is performing overall.

For example, in credit risk assessment where creditworthy and high-risk customers appear in roughly similar proportions, accuracy is a useful metric for evaluating a model. It gives a general sense of how well the model predicts both groups of customers, contributing to informed lending decisions.

- Precision: Use precision when the cost of false positives is high and you want to avoid false alarms (minimizing false positives).

For example, in fraud detection, precision is crucial because it indicates what fraction of the flagged cases are actually fraudulent. High precision means fewer legitimate cases are sent for manual investigation.

- Recall: Use recall when the cost of false negatives is high and you want to capture as many positive instances as possible, even at the expense of some false positives (minimizing false negatives).

For example, in medical diagnosis, a high recall is crucial because it means that most individuals who actually have the disease are correctly identified, even if this leads to some false alarms.

- F1 Score: Use the F1 score when you want a balance between precision and recall and when there is an uneven class distribution (imbalanced dataset).

For example, in sentiment analysis of customer reviews, the sentiment classes are often imbalanced, which makes F1 score a suitable metric. Because it takes both precision and recall into account, it rewards a model that correctly identifies reviews of each sentiment without simply over-predicting the majority class.
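To see why the choice of metric matters, the sketch below scores two hypothetical models on the same imbalanced test set of 1,000 instances, 20 of which are positive. Model A flags very few cases and is rarely wrong when it does; model B flags many cases and catches almost every positive. All counts and model names are invented for illustration.

```python
from typing import Dict


def metrics(tp: int, tn: int, fp: int, fn: int) -> Dict[str, float]:
    """Accuracy, precision, recall and F1 from confusion counts (0.0 on zero division)."""

    def safe_div(num: float, den: float) -> float:
        return num / den if den else 0.0

    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    return {
        "accuracy": safe_div(tp + tn, tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "f1": safe_div(2 * precision * recall, precision + recall),
    }


# Hypothetical test set: 1,000 instances, 20 of them positive.
models = {
    "A (cautious)": dict(tp=6, tn=979, fp=1, fn=14),
    "B (aggressive)": dict(tp=18, tn=950, fp=30, fn=2),
}

results = {name: metrics(**counts) for name, counts in models.items()}
for name, m in results.items():
    print(name, {k: round(v, 3) for k, v in m.items()})

# Which model "wins" depends on the metric you decided to prioritize.
for metric_name in ("accuracy", "precision", "recall", "f1"):
    best = max(results, key=lambda n: results[n][metric_name])
    print(f"best by {metric_name}: {best}")
```

With these counts, model A has the higher accuracy (0.985 vs 0.968) and precision (about 0.857 vs 0.375), while model B has the higher recall (0.9 vs 0.3) and F1 (about 0.529 vs 0.444). A fraud team worried about wasted investigations might lean towards A, whereas a screening program worried about missed cases would lean towards B.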

Common mistake with accuracy

It is important to note that in imbalanced datasets, accuracy may not be a reliable measure: a high accuracy score can be driven by the model’s ability to predict the majority class while it performs poorly on the minority class. In such cases, precision, recall or the F1 score can provide a more meaningful evaluation of the model’s performance.

For example, consider a cancer prediction scenario where only 3% of the data are positive cases (cancer instances). Even an untrained model that simply predicts every case as negative (non-cancer) would achieve 97% accuracy. However, this high accuracy is misleading, because the model identifies none of the positive cases, which are the critical ones in cancer prediction. Its recall is 0.
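The arithmetic in this example is easy to reproduce. The sketch below assumes a hypothetical test set of 100 patients, 3 of whom have cancer (label 1), and a "model" that always predicts 0 (non-cancer).

```python
# Hypothetical test set: 100 patients, 3 with cancer (label 1), 97 without (label 0).
y_true = [1] * 3 + [0] * 97
# A "model" that learns nothing and predicts non-cancer for everyone.
y_pred = [0] * len(y_true)

# Accuracy: share of predictions that match the true label.
correct = sum(t == p for t, p in zip(y_true, y_pred))
accuracy = correct / len(y_true)

# Recall: share of actual cancer cases that the model flagged.
true_positives = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
actual_positives = sum(t == 1 for t in y_true)
recall = true_positives / actual_positives

print(f"accuracy = {accuracy:.2f}")  # accuracy = 0.97
print(f"recall   = {recall:.2f}")    # recall   = 0.00
```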

Solution: To address this issue, we prioritize the use of the recall metric. By focusing on minimizing false negatives, we ensure that the model correctly identifies as many positive instances (cancer cases) as possible. In the context of medical diagnosis, missing a true positive (a cancer case) can have serious consequences, leading to delayed or missed treatment. Therefore, optimizing for recall allows us to increase the sensitivity of the model and improve its ability to detect cancer cases effectively, even if it results in some false alarms (false positives).
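Continuing with the same hypothetical 100-patient test set, the sketch below compares the all-negative baseline with an invented screening model that flags 10 patients, including all 3 cancer cases. The screening model raises 7 false alarms, yet its recall rises from 0 to 1, which is the trade-off described above. The counts and the helper name `evaluate` are assumptions for illustration.

```python
from typing import Dict


def evaluate(tp: int, tn: int, fp: int, fn: int) -> Dict[str, float]:
    """Accuracy, recall and precision from confusion counts (0.0 when undefined)."""
    total = tp + tn + fp + fn
    return {
        "accuracy": (tp + tn) / total,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
    }


# Same hypothetical test set: 100 patients, 3 with cancer.
baseline = evaluate(tp=0, tn=97, fp=0, fn=3)   # predicts "non-cancer" for everyone
screening = evaluate(tp=3, tn=90, fp=7, fn=0)  # flags 10 patients, catching all 3 cancers

for name, m in (("baseline", baseline), ("screening", screening)):
    print(f"{name:9}  accuracy={m['accuracy']:.2f}  "
          f"recall={m['recall']:.2f}  precision={m['precision']:.2f}")
```

Ranked by accuracy, the baseline looks better (0.97 vs 0.93). Ranked by recall, the screening model is the only one that finds any cancer cases at all; its precision of 0.30 is the price paid in false alarms.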

Conclusion

Choosing the appropriate performance metric is crucial, and it starts with knowing the goal you want the model to achieve. Precision is essential when the cost of false positives is high, recall is crucial when the cost of false negatives is high, and the F1 score strikes a balance between precision and recall, which is especially useful with an imbalanced dataset.

In the next article, I will explain how these metrics relate to the AUC-ROC curve, which can be useful when dealing with imbalanced datasets and complements the information provided by accuracy, precision, recall and F1 score. Understanding how to interpret the AUC-ROC curve will further enhance our ability to assess and compare different models effectively. Stay tuned for an in-depth explanation in the upcoming article.

Reference: